9 product prioritization frameworks for product managers

Tempo Team

Key Takeaways

No single decision-making framework beats all others – the right one depends on what data you have, who needs to be involved, and what kind of decision you're making.

RICE and Value vs. Effort are the two most practical starting points for teams that want quantitative ranking without heavy setup.

Frameworks like the Kano model and Buy a Feature bring customers into the process directly, which is useful when internal opinion dominates roadmap discussions.

Good prioritization makes tradeoffs visible – it doesn't produce a perfect answer, but it gives teams a shared basis for the conversation.

Product prioritization sounds simple: rank your features, work on the important ones first.

In practice, you're juggling competing opinions from stakeholders, customer requests that contradict each other, and dev teams with strong views on what's actually buildable. Narrowing a backlog for a sprint or a roadmap is one of the harder parts of a product manager's job.

Another challenge is knowing how many people – team members, stakeholders, customers – should weigh in, and how much their input should actually influence which features, tasks, and updates make the cut.

"The most popular feature and the second most popular feature don't necessarily belong together in the same product. You've got to have a deliberate strategy where you go: We have a particular type of customer that we're trying to serve and we are trying to solve their biggest problems in a way that makes us money. That's a complex problem of finding the overlap in multiple different areas, not to mention things that a team can reasonably do technologically." – Bruce McCarthy, Product Manager and co-author of Product Roadmaps Relaunched

Good product prioritization frameworks give you a way to quiet the loudest voice in the room. They replace gut-feel debates with quantitative rankings, charts, and matrices tied directly to customer feedback and product strategy.

Tempo Strategic Roadmaps has two of these frameworks built in: RICE and Value vs. Effort. This guide covers those two in detail, plus seven more:

Kano model

Story mapping

The MoSCoW method

Opportunity scoring

Product tree

Cost of Delay

Buy a Feature

1. RICE

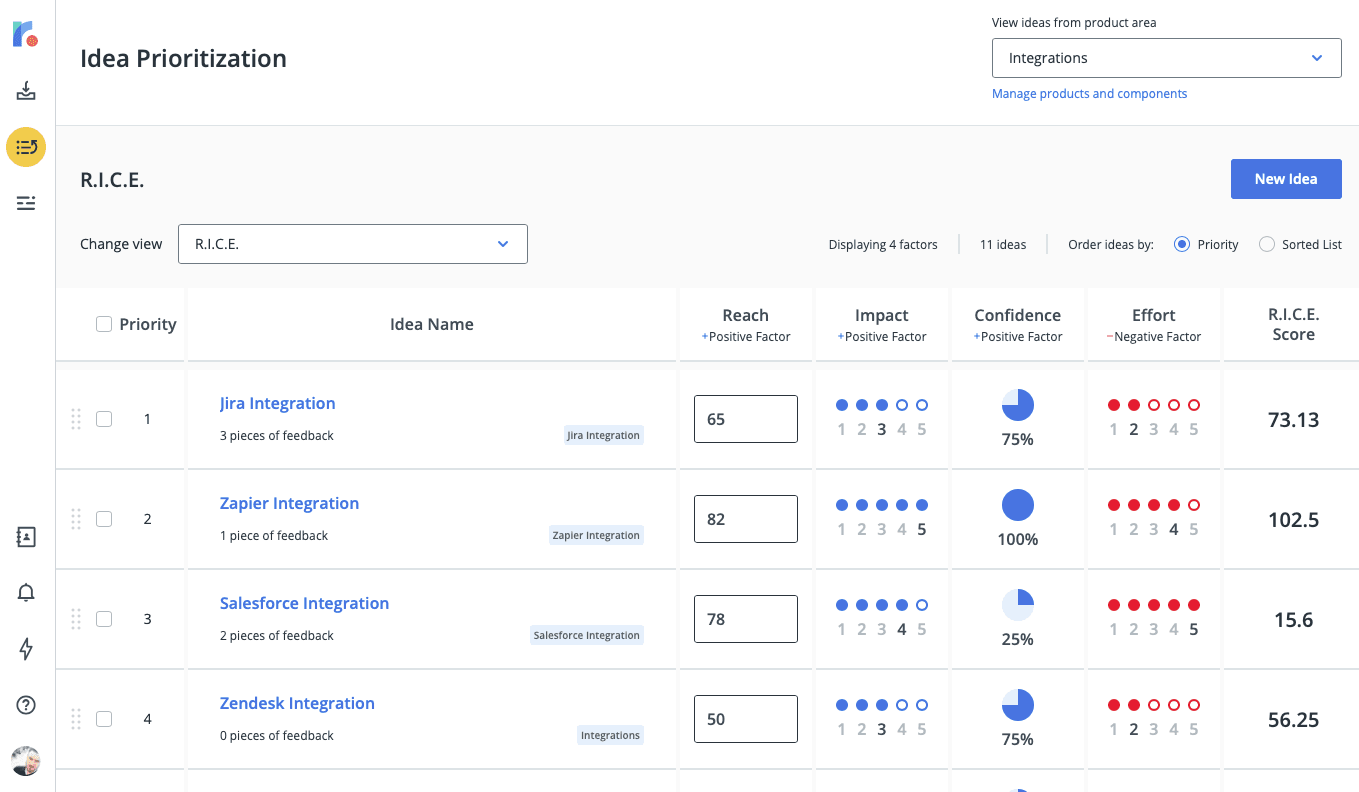

Developed at Intercom as an internal scoring system for prioritizing ideas, RICE helps product teams focus on the initiatives most likely to move a specific goal forward.

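The method evaluates each idea on four factors: reach, impact, confidence, and effort. Here's what each factor means and how to quantify it:

Reach: How many people or events the initiative will affect in a given period, such as customers per quarter.

Impact: How much the initiative moves the goal for each person reached. Intercom's original scale runs from 0.25 (minimal) to 3 (massive).

Confidence: How sure you are of the reach and impact estimates, expressed as a percentage.

Effort: The total work required from the team, usually estimated in person-months.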

Those individual numbers feed into one formula, giving teams a standardized score they can apply across any type of initiative on the roadmap:

RICE score = (Reach × Impact × Confidence) ÷ Effort

After running each feature through this calculation, you get a final RICE score you can use to rank order. Here's an example:
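As a rough sketch of that calculation, the snippet below scores and ranks a small backlog. The feature names and every number in it are hypothetical, and the `Idea` class is an illustration, not how Strategic Roadmaps or Intercom implement scoring. It assumes confidence is stored as a fraction and effort is in person-months.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    reach: float       # people or events affected per time period
    impact: float      # e.g. 0.25 (minimal) up to 3 (massive)
    confidence: float  # fraction between 0 and 1
    effort: float      # person-months

    def rice_score(self) -> float:
        # RICE = (reach * impact * confidence) / effort
        if self.effort <= 0:
            raise ValueError("effort must be positive")
        return self.reach * self.impact * self.confidence / self.effort


# Hypothetical backlog; all values are made up for illustration.
ideas = [
    Idea("Bulk export", reach=500, impact=2, confidence=0.8, effort=2),
    Idea("Dark mode", reach=2000, impact=0.5, confidence=1.0, effort=4),
    Idea("SSO login", reach=300, impact=3, confidence=0.5, effort=3),
]

# Highest score first.
ranked = sorted(ideas, key=lambda idea: idea.rice_score(), reverse=True)
for idea in ranked:
    print(f"{idea.name}: {idea.rice_score():.0f}")
```

Note how "Dark mode" reaches the most people but still lands below "Bulk export" once impact and effort are factored in.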

Pros of the RICE method

It requires teams to make their product metrics SMART – specific, measurable, attainable, relevant, and time-based – before they score anything.

It reduces the pull of inherent biases by adding a confidence dimension. Instead of debating "how much is this feature worth," the conversation becomes "how confident are we in each of these estimates?" That's a more productive discussion.

Cons of the RICE method

RICE scores don't account for dependencies. A feature with a high score sometimes needs to wait for something else – so treat the score as an input, not a final verdict.

Estimations will never be fully accurate. RICE is an exercise in structured estimation, not prediction.

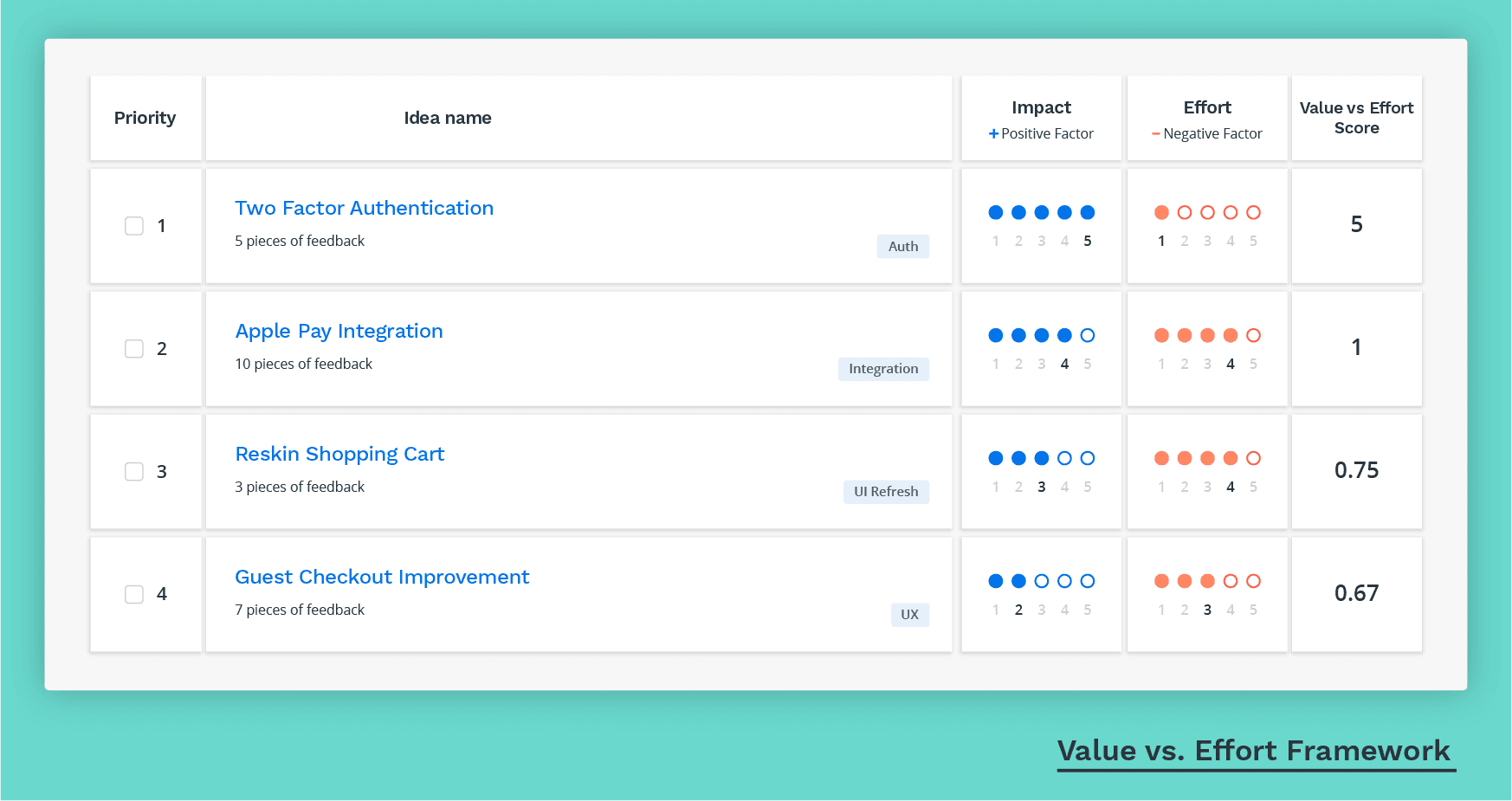

2. Value vs. Effort

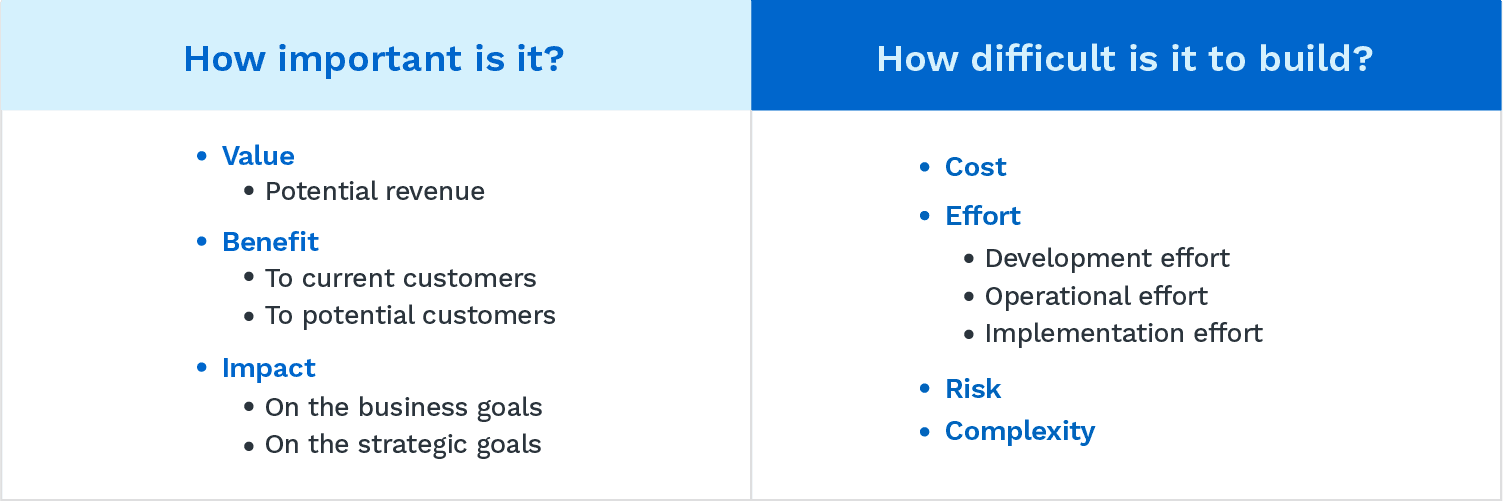

This approach takes your list of features and assigns two scores to each: value and effort. The goal is a quick, visual way to see which ideas are worth pursuing now and which aren't.

The scores are estimates. A lot of judgment goes into answering the core questions: Will this push our goals forward if we build it? Can we actually build it with what we have?

Value vs. Effort also creates space for the disagreements that should happen. When stakeholders argue over how to score a feature, they're working through the strategic alignment gaps that need to be resolved anyway. Here's what a Value vs. Effort scorecard looks like in Strategic Roadmaps:

Value vs. Effort is one of two built-in prioritization templates available in Strategic Roadmaps. You can also create custom prioritization criteria using any factors relevant to your organization.
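Outside of any particular tool, the two-score idea can be sketched in a few lines. The example below is an assumption-heavy illustration: the 1 to 10 scale, the midpoint of 5, the quadrant names, and the value-to-effort ratio used for ordering are all choices made for this sketch, not part of the framework's definition or of Strategic Roadmaps.

```python
from typing import NamedTuple


class Scored(NamedTuple):
    name: str
    value: int   # 1 (low) to 10 (high), agreed by the team
    effort: int  # 1 (low) to 10 (high), agreed by the team


def quadrant(item: Scored, midpoint: int = 5) -> str:
    """Place an item in one of four value/effort quadrants."""
    high_value = item.value > midpoint
    high_effort = item.effort > midpoint
    if high_value and not high_effort:
        return "quick win"
    if high_value and high_effort:
        return "big bet"
    if not high_value and not high_effort:
        return "fill-in"
    return "time sink"


# Hypothetical backlog items and scores.
backlog = [
    Scored("Onboarding checklist", value=8, effort=3),
    Scored("Platform rewrite", value=9, effort=9),
    Scored("Tooltip copy tweaks", value=3, effort=2),
    Scored("Custom report builder", value=4, effort=8),
]

# Order by value-to-effort ratio, highest first.
by_ratio = sorted(backlog, key=lambda i: i.value / i.effort, reverse=True)
for item in by_ratio:
    print(f"{item.name}: {quadrant(item)} (ratio {item.value / item.effort:.2f})")
```

The ratio is only a convenience for sorting. The quadrant label is usually the more useful output, because it mirrors the chart teams discuss in the room.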

Pros of Value vs. Effort

What counts as "value" or "effort" is flexible. For some teams, effort means development time; for others, it's total implementation cost. That flexibility makes this framework usable across industries and product types.

It's a practical alignment tool. Forcing teams to put numbers on features surfaces assumptions and gets people on the same page about what actually matters.

In resource-constrained environments, a Value vs. Effort analysis focuses attention on the highest-impact work without requiring complex models.

It's easy to run. No formulas, no special software – just an agreed-upon scoring scale and a shared document.

Cons of Value vs. Effort

It's largely an estimation exercise, which leaves room for cognitive bias. Final scores can be inflated or deflated depending on who's in the room.

Getting product and dev teams to agree on scores can take time, particularly when there are strong opinions in both directions.

It gets harder to use as team size grows. With multiple product lines and product teams, scoring consistency breaks down.

3. Kano model

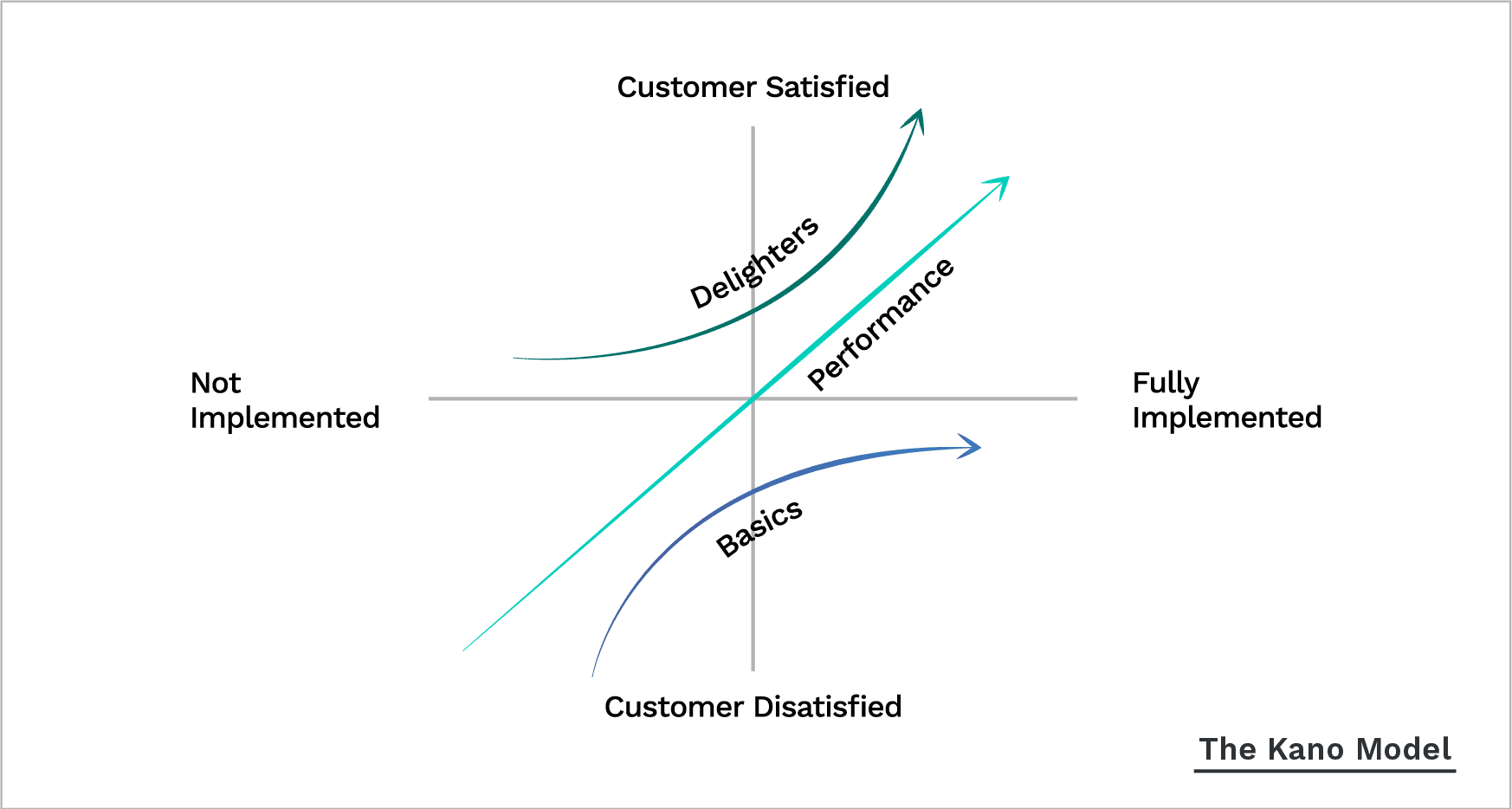

The Kano model plots two dimensions against each other: how fully a customer need is met (implementation) and how satisfied customers are as a result. It splits features into three buckets:

Must-haves: Features customers expect as a baseline. Without them, the product isn't viable.

Performance features: The more you invest here, the higher customer satisfaction climbs.

Delighters: Surprises that customers didn't ask for but respond to with genuine enthusiasm when you deliver them.

You gather this data through a Kano questionnaire, asking customers how they'd feel both with and without each feature. The core idea: investing in any bucket makes customers happier, but not at the same rate. The Kano model shows you which bucket to invest in first, and that ordering is where most teams go wrong.
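To make the questionnaire step concrete, here is a minimal sketch of how paired answers are commonly turned into categories. It assumes the widely published Kano evaluation table and a five-option answer scale. The answer labels and the extra categories (indifferent, reverse, questionable) are not mentioned in this article and are included only because the standard table produces them.

```python
from collections import Counter

ANSWERS = ["like", "expect", "neutral", "tolerate", "dislike"]

# Rows: answer when the feature is present (functional question).
# Columns: answer when the feature is absent (dysfunctional question),
# in the same order as ANSWERS.
# M = must-have, P = performance, D = delighter,
# I = indifferent, R = reverse, Q = questionable (contradictory answers).
TABLE = {
    "like":     ["Q", "D", "D", "D", "P"],
    "expect":   ["R", "I", "I", "I", "M"],
    "neutral":  ["R", "I", "I", "I", "M"],
    "tolerate": ["R", "I", "I", "I", "M"],
    "dislike":  ["R", "R", "R", "R", "Q"],
}


def classify(functional: str, dysfunctional: str) -> str:
    """Map one respondent's answer pair to a Kano category."""
    return TABLE[functional][ANSWERS.index(dysfunctional)]


def dominant_category(responses) -> str:
    """Return the most frequent category across all respondents."""
    counts = Counter(classify(f, d) for f, d in responses)
    return counts.most_common(1)[0][0]


# Hypothetical answers from four customers about a single feature.
responses = [
    ("like", "dislike"),
    ("like", "neutral"),
    ("like", "dislike"),
    ("expect", "dislike"),
]
print(dominant_category(responses))
```

Taking the most frequent category is the simplest aggregation. Real surveys often look at the full distribution, especially when two categories are close.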

Pros of the Kano model

The questionnaire prevents teams from over-investing in delighters while neglecting the must-haves that keep customers from churning.

It produces better market predictions for which features will land, and with whom.

Cons of the Kano model

Running a statistically meaningful Kano survey takes time. You need enough responses to be representative of your full customer base.

Customers may not understand the features you're asking them to evaluate, which skews the results.

4. Story mapping

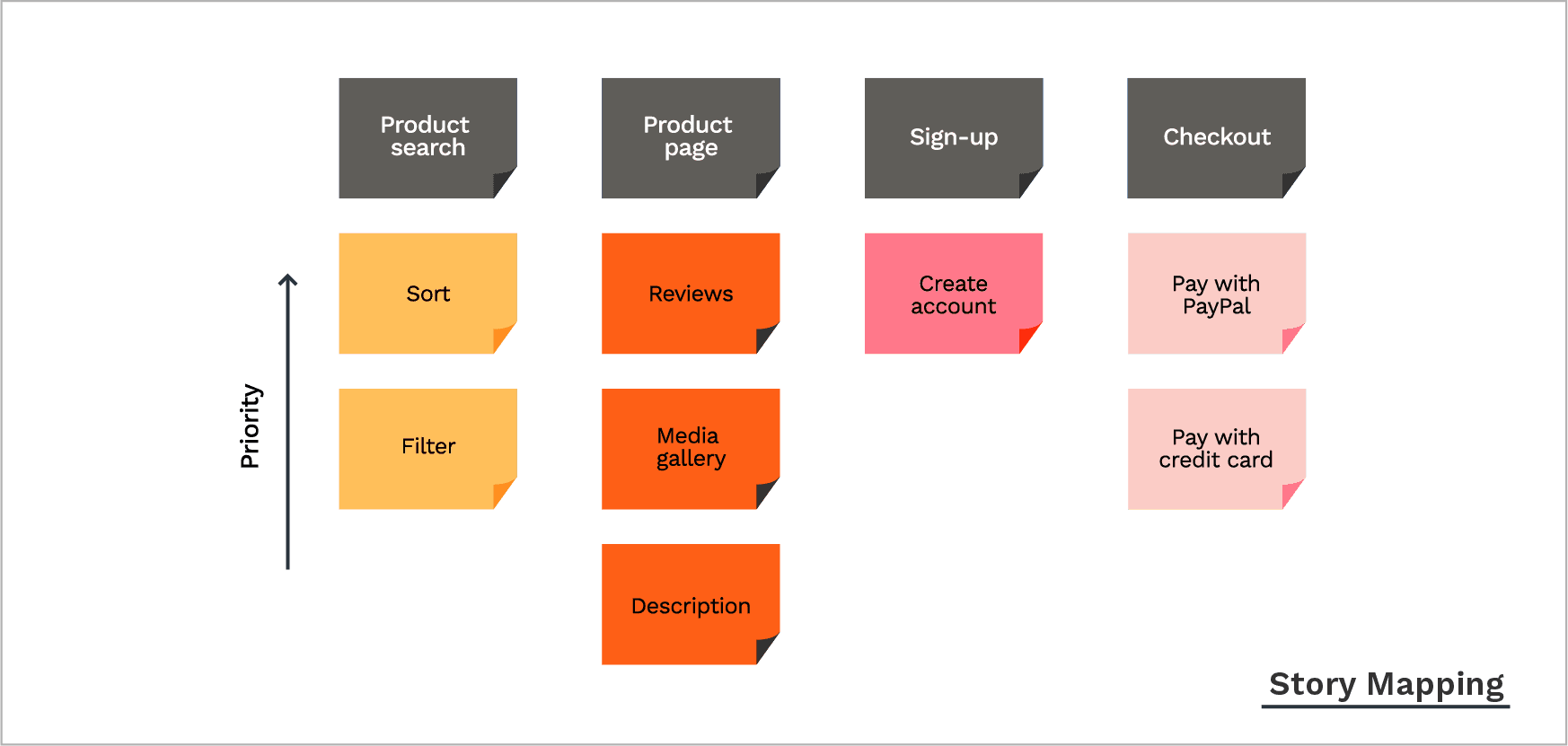

Story mapping is a visual technique that lays out each step of the user journey on a two-dimensional map, helping teams identify the must-have tasks for every release. It keeps the focus on the user's actual experience rather than the internal opinions of the team.

Along a horizontal axis, you create sequential buckets representing each stage of the user journey – from signing up, to setting up a profile, to using specific features. Along a vertical axis, you rank those tasks by importance, top to bottom. Work at the bottom can be labeled "backlog" for items you're deferring. Horizontal lines across the map divide stories into releases and sprints.
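The map described above is essentially a grid, which a short sketch can model. Everything here is hypothetical: the journey steps, the story names, and the `slice_releases` helper are made up for illustration, and the cut positions stand in for the horizontal lines a team would draw by hand.

```python
# Each journey step (a column) holds stories ranked top to bottom.
story_map = {
    "Sign up": ["Email sign-up", "Google sign-in", "Invite teammates"],
    "Set up profile": ["Add name and photo", "Connect calendar"],
    "Use features": ["Create roadmap", "Share roadmap", "Export to PDF"],
}


def slice_releases(story_map, cut_lines):
    """Split every column at the given row indexes.

    cut_lines=[1, 2] means release 1 takes row 0, release 2 takes row 1,
    and everything below the last line is treated as backlog.
    """
    bounds = [0, *cut_lines]
    releases = []
    for start, end in zip(bounds, bounds[1:]):
        releases.append(
            {step: stories[start:end] for step, stories in story_map.items()}
        )
    backlog = {step: stories[cut_lines[-1]:] for step, stories in story_map.items()}
    return releases, backlog


releases, backlog = slice_releases(story_map, cut_lines=[1, 2])
for number, release in enumerate(releases, start=1):
    print(f"Release {number}: {release}")
print(f"Backlog: {backlog}")
```

The first slice is a reasonable first guess at an MVP: one story per journey step, so a user can get through the whole flow end to end.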

Pros of story mapping

It's one of the fastest ways to identify your MVP.

It centers user experience. You're writing user stories, not internal requirements.

It's collaborative – story mapping is a group activity that works across functions.

Cons of story mapping

It doesn't account for external prioritization factors like business value or technical complexity.

5. The MoSCoW method

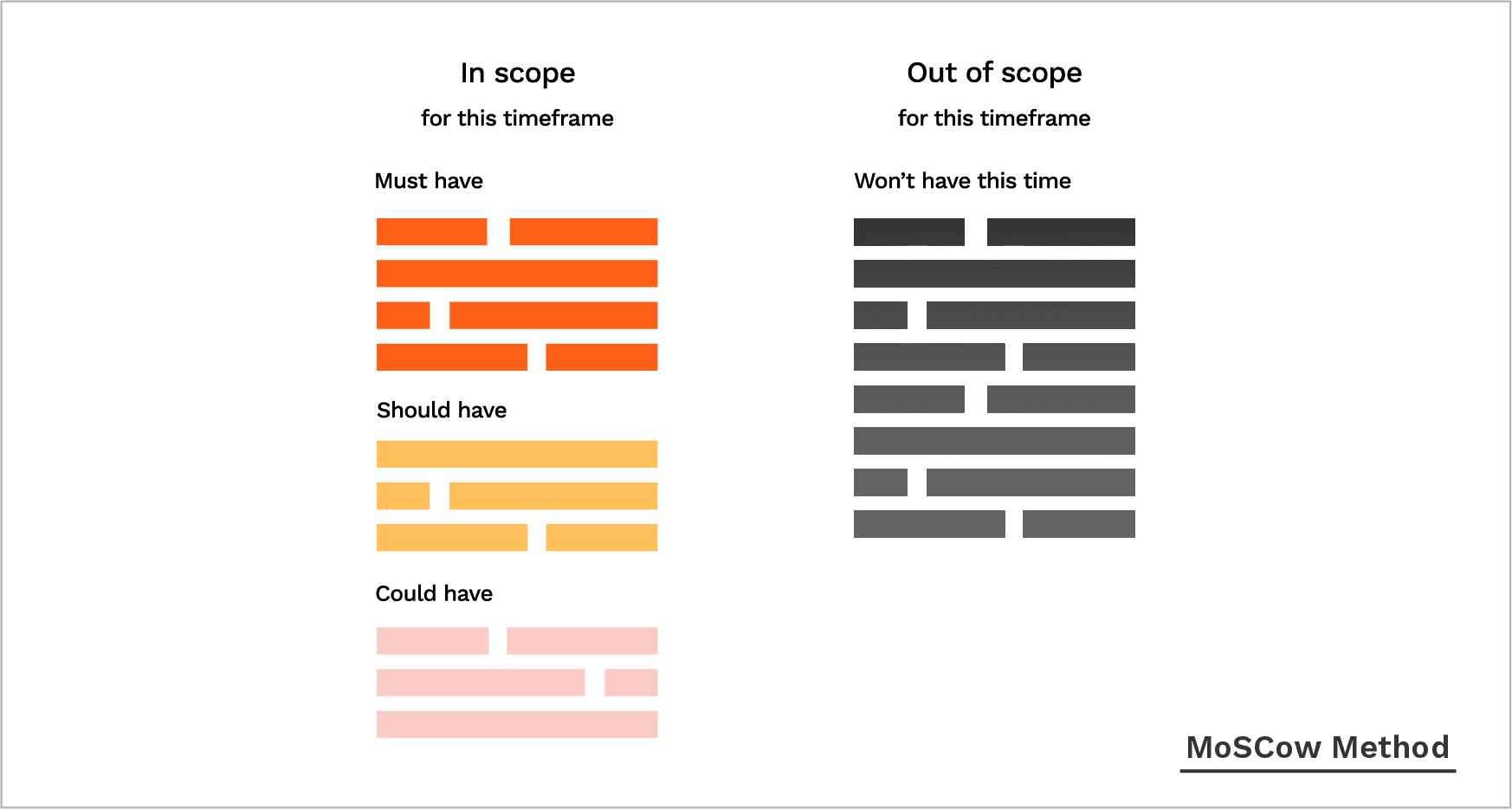

The MoSCoW method sorts features into four priority buckets, giving teams and stakeholders a shared vocabulary for what matters most right now. MoSCoW – the Os are added to make the acronym memorable, not a reference to the city – stands for Must-Have, Should-Have, Could-Have, and Won't-Have.

Must-Have: Non-negotiable requirements. If any of these are missing at launch, the product can't ship. Example: "Users MUST log in to access their account."

Should-Have: Important but not time-sensitive. These belong in the next release, not necessarily this one. Example: "Users SHOULD have an option to reset their password."

Could-Have: Bonuses that would improve satisfaction but won't break anything if they're absent. Example: "Users COULD save their work directly to the cloud from our app."

Won't-Have: The lowest-priority items, and the first to go under resource constraints. These are candidates for future releases.

The model is dynamic – a Won't-Have today can become a Must-Have once the product matures or the market shifts.
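A small sketch can show the four buckets as data and flag the most common failure mode, too many Must-Haves. The effort numbers, the "Offline mode" item, and the 60% threshold are assumptions chosen for illustration, not values from this article.

```python
from collections import defaultdict

BUCKETS = ("must", "should", "could", "wont")

# (feature, bucket, effort in story points); all values are hypothetical.
items = [
    ("Log in to account", "must", 5),
    ("Reset password", "should", 3),
    ("Save work to cloud", "could", 8),
    ("Offline mode", "wont", 13),
]


def group_by_bucket(items):
    """Group feature names under their MoSCoW bucket."""
    grouped = defaultdict(list)
    for name, bucket, _effort in items:
        if bucket not in BUCKETS:
            raise ValueError(f"unknown bucket: {bucket}")
        grouped[bucket].append(name)
    return dict(grouped)


def must_have_share(items):
    """Share of in-scope effort (all but Won't-Haves) taken by Must-Haves."""
    in_scope = [(bucket, effort) for _, bucket, effort in items if bucket != "wont"]
    total = sum(effort for _, effort in in_scope)
    must = sum(effort for bucket, effort in in_scope if bucket == "must")
    return must / total if total else 0.0


MAX_MUST_SHARE = 0.6  # illustrative threshold, tune for your team

grouped = group_by_bucket(items)
share = must_have_share(items)
print(grouped)
if share > MAX_MUST_SHARE:
    print(f"Must-Haves take {share:.0%} of planned effort; consider demoting some.")
else:
    print(f"Must-Haves take {share:.0%} of planned effort.")
```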

Pros of the MoSCoW method

It works well with non-technical stakeholders who need to participate in prioritization without drowning in scoring models.

It's fast to run and easy to explain.

It naturally prompts teams to think about resource allocation when categorizing features.

Cons of the MoSCoW method

Teams consistently overestimate the number of Must-Haves. If everything is critical, nothing is.

It's better at defining release criteria than ranking features within a release.

6. Opportunity scoring

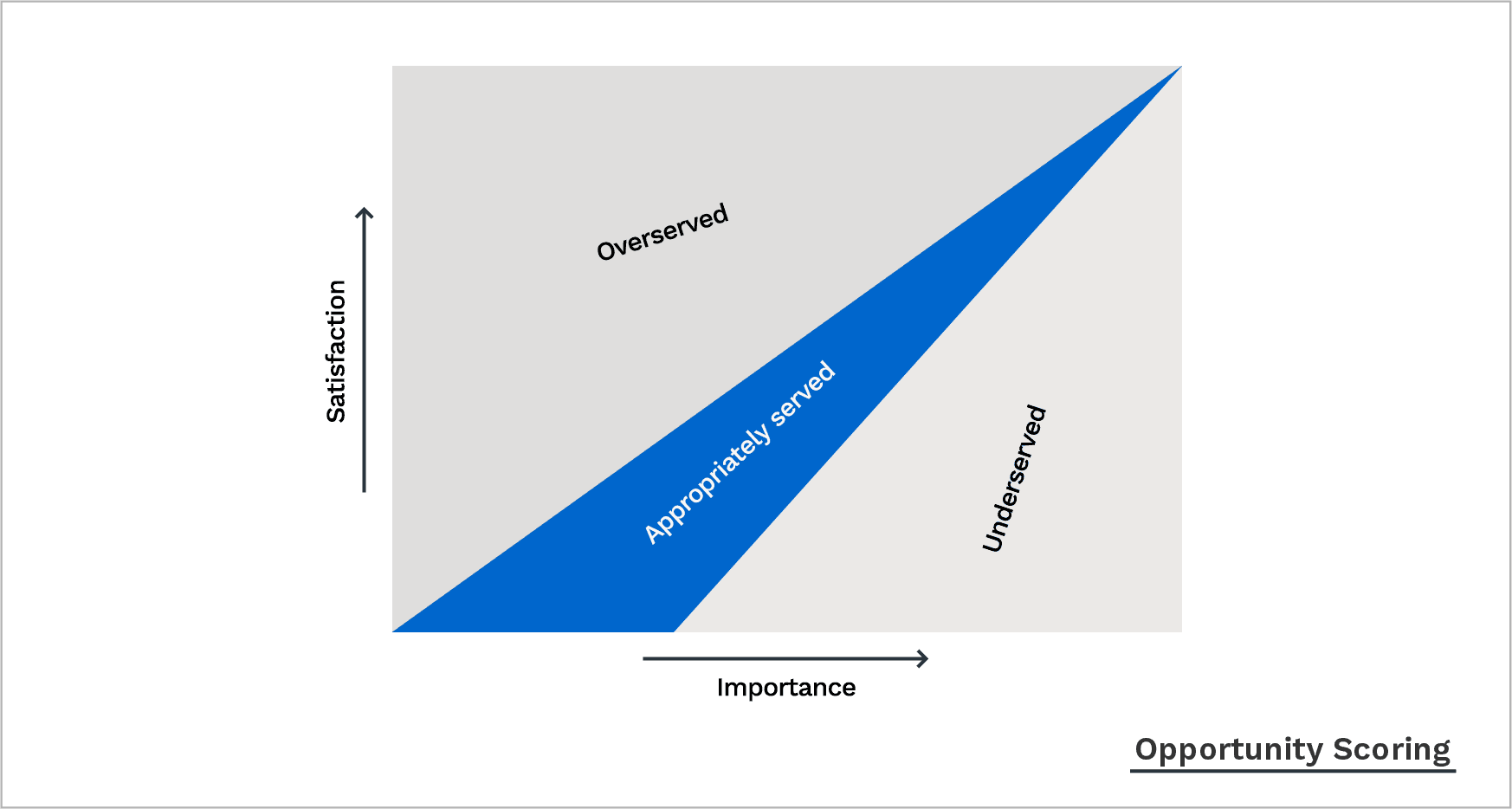

Opportunity scoring ranks potential features by comparing how important each outcome is to customers against how satisfied they currently are with existing solutions. The method comes from Anthony Ulwick's Outcome-Driven Innovation framework, which argues that customers buy products to get certain jobs done – and while customers struggle to generate solutions, their feedback on desired outcomes is genuinely useful.

The process: develop a list of ideal outcomes, then survey customers on two questions:

How important is this outcome or feature?

How satisfied are you with current solutions?

Plotting responses on a satisfaction-vs-importance graph reveals the features customers care about most but are poorly served by today – those are your next priorities.
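If you want a single number per outcome instead of a chart, one commonly cited formulation of Ulwick's opportunity algorithm is importance plus the unmet gap, with the gap floored at zero. The sketch below assumes both survey answers are averaged onto a 0 to 10 scale. The outcome names and scores are hypothetical, and the formula is not described in this article.

```python
def opportunity_score(importance: float, satisfaction: float) -> float:
    """importance + max(importance - satisfaction, 0), both on a 0-10 scale."""
    return importance + max(importance - satisfaction, 0)


# Hypothetical survey averages: outcome -> (importance, satisfaction).
outcomes = {
    "Share a roadmap with executives": (9.0, 4.0),
    "Import ideas from a spreadsheet": (6.0, 7.0),
    "Track feedback by customer segment": (8.0, 5.0),
}

# Highest opportunity first.
ranked = sorted(
    outcomes,
    key=lambda name: opportunity_score(*outcomes[name]),
    reverse=True,
)
for name in ranked:
    print(f"{name}: {opportunity_score(*outcomes[name]):.1f}")
```

Flooring the gap at zero means an over-served outcome is never penalized below its own importance. It simply stops gaining extra priority.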

Pros of opportunity scoring

Fast identification of the gaps where innovation will actually land.

Easy to visualize and act on.

Cons of opportunity scoring

Survey respondents tend to over- or understate feature importance, which can skew the graph.

7. The product tree

Developed by Luke Hohmann, the product tree – sometimes called pruning the product tree – is a collaborative workshop game designed to shape the product around the customer outcomes that generate the most value. The goal is to keep good product backlog items from getting buried.

Here's how it works:

Draw a large tree with several branches on a whiteboard or paper. The trunk represents features already in the product, the outermost branches represent features planned for the next release, and inner branches represent features not yet on the roadmap.

Ask participants – ideally customers – to write potential features on sticky notes (the leaves).

Ask them to place their leaves on the branch where they think the feature belongs.

Clusters tell you where customers want investment. Sparse branches signal lower-priority areas.

Pros of the product tree framework

Gives a visual read on how balanced your feature set is across product areas.

Pulls insight directly from customers without the rigidity of a formal survey.

Cons of the product tree framework

Produces no quantitative ranking – just a visual guide. Product managers have to translate the output into actual priorities.

Without clear groupings, the exercise can run long.

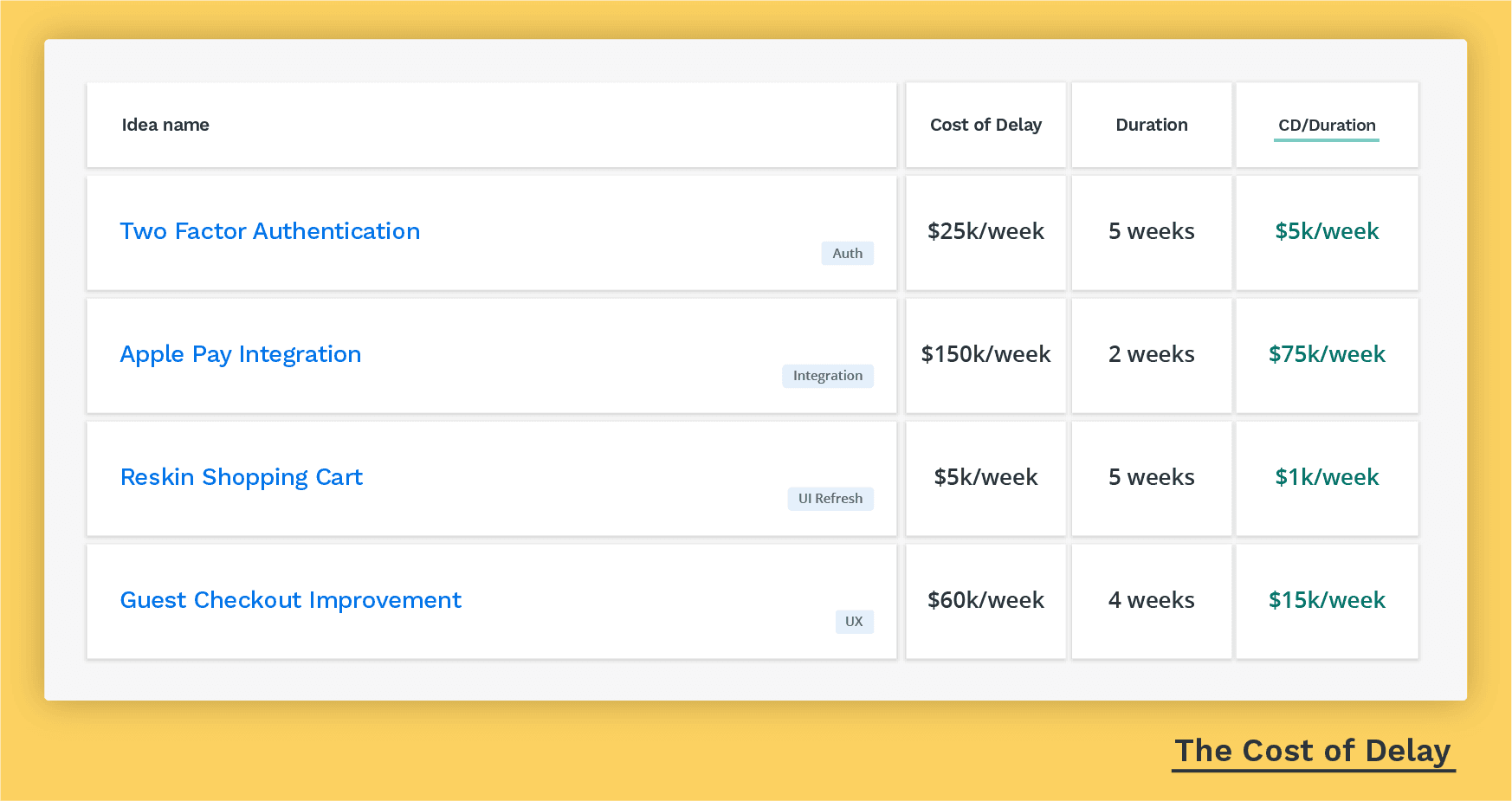

8. Cost of Delay

Joshua Arnold defined Cost of Delay as "a way to communicate the impact of time on the outcomes [the company wishes] to achieve." The method asks three questions about every feature:

What would this be worth if we had it right now?

How much more would it be worth if we delivered it earlier?

How much does it cost us for every week we wait?

You assign a monetary value by calculating the time and team effort required to build each feature, and the incremental value it creates once live.

Example: Feature A costs $30,000 per week delayed and takes three months to build. Feature B costs $10,000 per week delayed and takes the same time. Feature A goes first.
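When build times differ, weekly cost alone is not enough to decide the order. One common refinement, often called CD3 (Cost of Delay Divided by Duration), divides the weekly cost by how long the work takes. The sketch below reuses Features A and B from the example, assumes three months is about 13 weeks, and adds a hypothetical Feature C that is not from the article.

```python
def cd3(cost_per_week: float, duration_weeks: float) -> float:
    """Cost of delay per week, divided by the weeks needed to deliver."""
    if duration_weeks <= 0:
        raise ValueError("duration must be positive")
    return cost_per_week / duration_weeks


# (name, cost of delay per week in dollars, duration in weeks)
features = [
    ("Feature A", 30_000, 13),  # "three months" approximated as 13 weeks
    ("Feature B", 10_000, 13),
    ("Feature C", 12_000, 4),   # hypothetical, added for contrast
]

# Highest CD3 first.
ranked = sorted(features, key=lambda f: cd3(f[1], f[2]), reverse=True)
for name, cost, weeks in ranked:
    print(f"{name}: {cd3(cost, weeks):,.0f}")
```

With equal durations, A still beats B, matching the example. The shorter Feature C jumps ahead of both because it stops its weekly losses much sooner.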

Pros of the Cost of Delay method

Puts a dollar figure on backlog decisions, making the stakes concrete.

Shifts the team's mindset from "what does this cost to build" to "what does it cost us not to ship this sooner."

Better decisions get made when value and speed both factor in.

Cons of the Cost of Delay method

Assigning monetary value to a feature is partly gut-feel, which creates room for internal disagreement on the numbers.

9. Buy a Feature

Buy a Feature is an innovation game that can involve customers, internal stakeholders, or both. With customers, it gives you a quantifiable read on how much any given feature is worth to the people who'll actually use it.

The game works like this:

Choose a list of features, ideas, or updates and assign a monetary value to each – based on how much time, money, and effort each feature will cost the team.

Gather a group of up to eight customers (or internal team members) and give each person a set amount of money to "spend."

Ask participants to buy the features they want. Some will put everything on one feature they care about; others will spread it around. Ask them to explain their choices.

Reorder the feature list by total money spent.

A useful mechanic: price some features high enough that no individual can afford them alone. This forces participants to negotiate and pool their money, which reveals shared priorities.
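The tallying step of the game can be sketched as follows. The feature names, prices, budget, and participants are all hypothetical. "API access" is deliberately priced above a single budget to show the pooling mechanic, and treating a feature as bought once contributions cover its price is an assumption of this sketch.

```python
from collections import Counter

# Hypothetical price list and per-participant budget.
prices = {"Calendar sync": 40, "Dark mode": 20, "API access": 120}
budget = 100  # API access costs more than any one person can spend

# participant -> {feature: amount contributed}
bids = {
    "Ana": {"API access": 70, "Dark mode": 20},
    "Ben": {"API access": 50, "Calendar sync": 40},
    "Chloe": {"Calendar sync": 40, "Dark mode": 20},
}


def tally(bids, prices, budget):
    """Sum contributions per feature and list the features that got funded."""
    totals = Counter()
    for person, spend in bids.items():
        if sum(spend.values()) > budget:
            raise ValueError(f"{person} spent more than the budget")
        totals.update(spend)  # adds each amount to the feature's running total
    # A feature counts as bought once pooled money covers its price.
    bought = [f for f, total in totals.most_common() if total >= prices[f]]
    return totals, bought


totals, bought = tally(bids, prices, budget)
for feature, total in totals.most_common():
    print(f"{feature}: {total} spent (price {prices[feature]})")
```

Here Ana and Ben had to combine their money to afford "API access", which is the kind of shared priority the high price is meant to surface.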

Pros of the Buy a Feature method

If you want customer input without a dry questionnaire, this is more engaging. It forces people to justify their priorities through simulated but concrete tradeoffs.

Replaces abstract feature rankings with concrete decisions.

Cons of the Buy a Feature method

Only works for features already on your roadmap. It tells you what customers value most, not what they want that you haven't thought of yet.

Getting a group of customers in one place at the same time requires coordination.

Which framework should you use?

None of these frameworks replace judgment. They're tools for getting a room full of people – who all have different opinions – to agree on what matters most right now. The point isn't a perfect ranking. It's making tradeoffs visible so you can have a real conversation about them.

Teams sometimes use other models too, including weighted scoring prioritization and the ICE scoring model. You can also combine frameworks: Value vs. Effort for an initial triage, then RICE on the candidates that survive.

A good framework does a few things:

Brings multiple perspectives in – team members, stakeholders, and sometimes customers.

Produces results you can act on, not just a vague sense that several things matter.

Connects individual features back to the company's real goals.

Removes the noise. Ideas that don't hold up under scrutiny should fall away cleanly.

Prioritization is only one piece. You also need to communicate the "why" behind your decisions across the whole organization – which is the harder part.

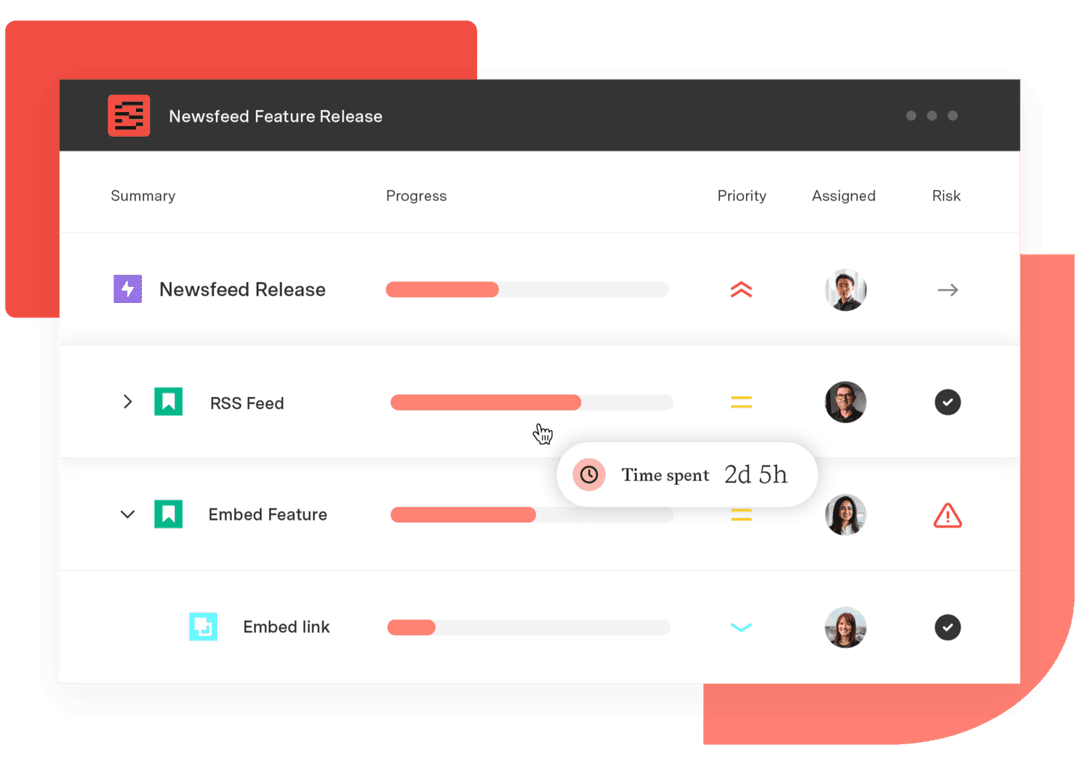

That's where an end-to-end roadmapping tool like Strategic Roadmaps can help. With it, you can:

Capture, manage, and analyze customer feedback in one organized place

Create a backlog of customer-validated product ideas

Surface the best ideas using built-in prioritization templates

Commit to ideas by promoting them to your roadmaps
