How to build better minimum viable products and features with user journey mapping

Tempo Team

Key Takeaways

Teams often confuse an MVP with a rough draft of the finished product they already have sketched, which is why so many MVPs ship with more scope than the assumption under test ever justified.

User journey mapping belongs before the MVP scope decision. It surfaces which pain points deserve the first release and which features can wait.

Dropbox, Buffer, Airbnb, and Zappos all validated demand before shipping fully built software. Validation before building is the baseline at any stage, including for mature teams.

An MVP, or minimum viable product, is the leanest build that tests one specific assumption with real users.

"Minimum" carries a lot of meaning in the term "minimum viable product." It tends to get ground down into dust.

What you ought to be doing is pairing MVP user journey mapping with real scope discipline. You'll chart the user's actual path to their goal, then scope the build tightly around the single moment of highest friction on that path.

Journey maps expose where people abandon the product or build a workaround. Then, the MVP removes that specific friction with the smallest build that can honestly test the fix.

To hold the line on that scope, teams have to commit ahead of time to which metric would count as validation and which number would count as a kill. They also agree ahead of time that "while we're in there" requests get written down and deferred. Not absorbed. Without those agreements in place before the first design review, stakeholders take over the MVP and "minimum" vanishes under all the added surface area.
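One way to make that commitment concrete is to write it down as data before the build starts. The sketch below is illustrative only: the `MvpCommitment` class, the metric name, and both thresholds are hypothetical, and your own validation and kill numbers should come from your team, not from this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MvpCommitment:
    """Thresholds agreed on before the first design review (hypothetical)."""
    metric: str
    validate_at: float  # at or above this value, the assumption is validated
    kill_at: float      # at or below this value, the team stops

    def decide(self, observed: float) -> str:
        if observed >= self.validate_at:
            return "validated"
        if observed <= self.kill_at:
            return "kill"
        return "inconclusive"


# Example numbers are assumptions, chosen only to show the shape of the rule.
commitment = MvpCommitment(
    metric="week-4 repeat usage rate",
    validate_at=0.40,
    kill_at=0.15,
)

# "While we're in there" requests are written down and deferred, not absorbed.
deferred_requests: list[str] = []


def defer(request: str) -> None:
    deferred_requests.append(request)


defer("Add CSV export while we're in there")
print(commitment.decide(0.45))
print(commitment.decide(0.10))
print(deferred_requests)
```

Freezing the dataclass is a small design choice that mirrors the point of the agreement: once the thresholds are set, nobody edits them after the results come in.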

What "viable" actually means in product development

In plain language, viable means something works as intended. In product development, the definition is narrower: a viable product is one customers are willing to use regularly because it solves a specific problem they have.

Worth noting: Viable doesn't mean "willing to pay for it." It means "willing to use it consistently because it solves something real." That distinction matters when you're evaluating MVP success.

Why build an MVP before a finished product?

Before serious development work starts, PMs use MVPs to validate the product idea and reduce risk. Instead of going to market with a heavily developed product, they ship a bare-bones version to test whether target users see value in it.

MVPs serve three purposes.

Validation: Real user behavior tells you whether your assumptions about the problem were correct.

Focus: Building only the core feature set forces the team to decide what actually matters, which protects against the feature trap – when a product tries to do everything and ends up doing nothing well.

Progress toward product/market fit: The metrics from real MVP users should feed directly into the improvements.

How to use user journey mapping to define your MVP

User journey mapping is a method for discovering which problems are actually worth solving. UX designers, marketers, and product managers use it to chart every action a user takes to reach a goal.

User story mapping is a related practice that connects user stories to outcomes and releases – useful when you're sequencing work across iterations. (For a detailed walkthrough, see Atlassian's user story mapping guide.)

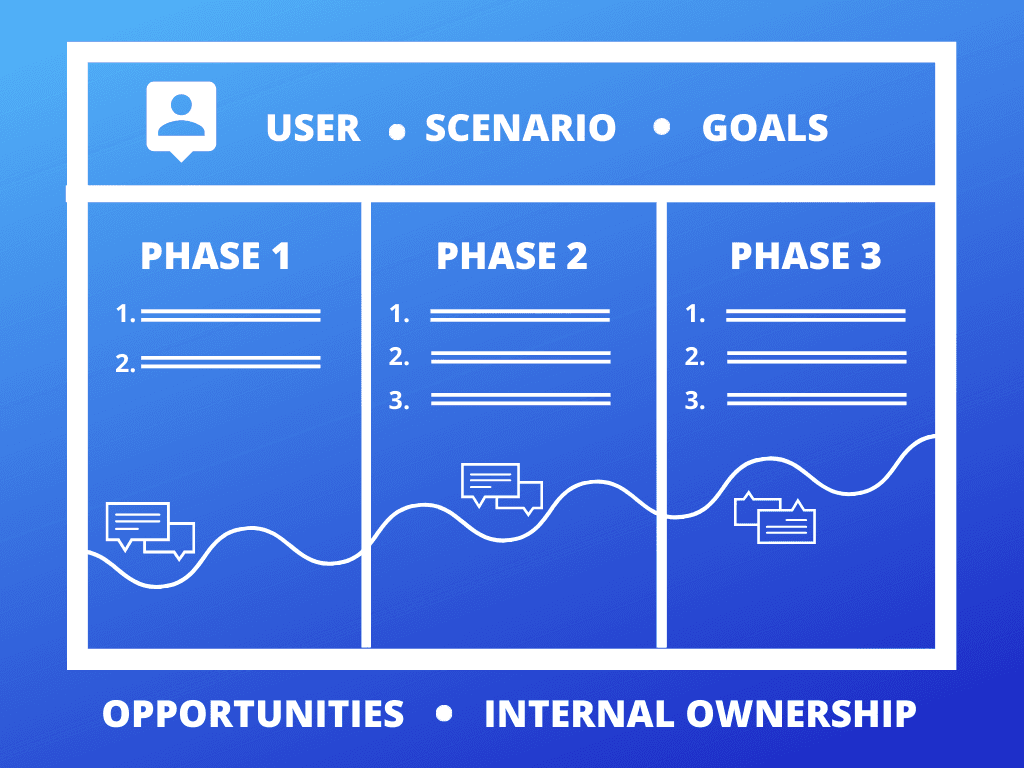

What a user journey map looks like depends on the product and the scope you're focused on. The general process: define your scope – the full end-to-end user experience, or a specific feature or interaction. Define the user, scenario, and goal. What does a successfully completed goal look like? What expectations does the user bring?

Then map actions and activities – write down the steps and phases of the journey needed to complete the experience, including pain points and obstacles. Look for opportunities: which pain points could become feature ideas?

Finally, prioritize and assign. Decide which features to build first and who owns the work.

The output is a ranked view of user problems, which is the right starting point for any MVP decision.
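If you have funnel counts for each step of the journey, that ranking can be roughed out in a few lines. This is a minimal sketch under stated assumptions: the `JourneyStep` fields, the step names, and the numbers are all made up for illustration, and drop-off rate is only one possible proxy for friction.

```python
from dataclasses import dataclass


@dataclass
class JourneyStep:
    name: str
    users_entered: int
    users_completed: int
    workaround_reports: int = 0  # e.g. from interviews or support tickets

    @property
    def drop_off_rate(self) -> float:
        if self.users_entered == 0:
            return 0.0
        return 1 - self.users_completed / self.users_entered


def rank_by_friction(steps: list[JourneyStep]) -> list[JourneyStep]:
    """Highest drop-off first; workaround reports break ties."""
    return sorted(
        steps,
        key=lambda s: (s.drop_off_rate, s.workaround_reports),
        reverse=True,
    )


# Hypothetical journey data.
steps = [
    JourneyStep("Sign up", users_entered=1000, users_completed=800),
    JourneyStep("Import data", users_entered=800, users_completed=360,
                workaround_reports=12),
    JourneyStep("Share report", users_entered=360, users_completed=300,
                workaround_reports=3),
]

ranked = rank_by_friction(steps)
for step in ranked:
    print(f"{step.name}: {step.drop_off_rate:.0%} drop-off")
```

In this made-up data, the import step loses the largest share of users, so it would be the first candidate for the MVP to address.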

Validate your idea before you build the MVP

Before shipping an MVP, most experienced PMs run a proof of concept to confirm there's a customer base worth building for. Common approaches: A/B tests on a landing page, demo videos, crowdfunding campaigns.

Two examples worth studying.

Dropbox: Drew Houston posted a demo video to Hacker News showing an early walkthrough of the product concept. The comments gave immediate feedback. A later, more polished demo video drove their beta waitlist from 5,000 to 75,000 signups.

Buffer: Their simple landing page explained how Buffer would help users schedule tweets and collected emails to validate interest. They later added a pricing page to validate that people would pay.

Does money equal validation? Mostly. If users will click a "pay" button or enter credit card information, you have strong signal. But payment validation and user research aren't the same thing. Payment tells you there's a market, not what the product should actually do.

What belongs in your minimum viable product?

Think of an MVP as the leanest possible build that lets you test your core assumption with real people.

Start by distilling the user problem and proposed solution to their most basic forms. Many organizations use Toyota's 5 Whys approach to strip a problem down to its root cause. Once you have that, narrow to the features that solve that one problem. Nothing else belongs in the MVP.

The MoSCoW method is one reliable framework for sorting a feature list: must-haves, should-haves, could-haves, won't-haves. Must-haves are the only features that belong in an MVP. Everything else is contingent on time, resources, or impact on user satisfaction.
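As a rough illustration of that sorting rule, the sketch below tags a backlog with the four categories and keeps only the must-haves. The feature names and the `Priority` enum are hypothetical, and real prioritization involves far more judgment than a filter.

```python
from enum import Enum


class Priority(Enum):
    MUST = "must-have"
    SHOULD = "should-have"
    COULD = "could-have"
    WONT = "won't-have"


# Hypothetical backlog for a tweet-scheduling product.
backlog = {
    "Schedule a post": Priority.MUST,
    "Connect one social account": Priority.MUST,
    "Analytics dashboard": Priority.SHOULD,
    "Team approvals": Priority.COULD,
    "Native mobile app": Priority.WONT,
}


def mvp_scope(features: dict[str, Priority]) -> list[str]:
    """Only must-haves make it into the MVP; everything else waits."""
    return [name for name, priority in features.items()
            if priority is Priority.MUST]


print(mvp_scope(backlog))
```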

You can also validate features with data before you build. A/B testing a landing page with four features shown to one group and five to another tells you whether that fifth feature is actually a must-have – without committing any engineering time. Keep tests isolated to one variable at a time so you can read the results.
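To read the result of a test like that, one common option is a two-proportion z-test on the signup rates of the two page variants. The sketch below uses only the Python standard library; the visitor and signup counts are invented, and the choice of test and any significance cutoff are assumptions you should check against your own setup.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical counts: variant A lists four features, variant B lists five.
z, p_value = two_proportion_z_test(conv_a=120, n_a=2000,
                                   conv_b=156, n_b=2000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the fifth feature changed signup behavior, but it says nothing about why, which is where the journey map and user interviews come back in.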

Here's a useful test for a finished MVP: If you never improved it again, would it still be useful to the people it's built for? If yes, you have an MVP. If no, you have a prototype.

Henrik Kniberg's well-known "Start with the skateboard" diagram illustrates this clearly. Products that are partially built don't validate anything. Users need something functional from the first iteration – even if it's a skateboard, not a car – to give you real feedback.

Real-world MVP formats: Concierge and Wizard of Oz

After deciding what to include in your MVP, there are several ways to package and ship it.

Spotify is a classic product-led example. To validate a bufferless streaming experience, they built a simple desktop app with playlists and basic playback controls, then put it in front of friends and family. The feedback confirmed two things: People would stream music rather than own it, and the technology worked. That was enough to convince music labels and investors.

Airbnb (2007) is the canonical concierge MVP. During a design conference, the co-founders listed their own San Francisco apartment on a bare-bones website, set up air mattresses, and offered breakfast. Three paying guests. That's all it took to validate the core assumption: People would pay to stay in a stranger's home rather than a hotel.

Zappos used the Wizard of Oz format. The website looked like an automated e-commerce experience, but every order was fulfilled manually – Zappos employees physically went to stores, bought the shoes, and shipped them. The user experience felt automated. The team learned whether people would actually buy shoes online without committing to inventory or warehouse infrastructure.

How to learn from your MVP and decide what to build next

An MVP isn't a one-time event. There's no final version of a product. Building, measuring, and learning is the core of any agile product practice, and what you learn after your MVP is in users' hands will either confirm your direction or redirect it.

Before you ship, define your success metrics. The right question: "Which metrics would move if we're solving the right user problem?" Useful candidates include feature-flow analysis to confirm which features users perceive as most valuable, repeat usage rates as a proxy for real problem-solving, and concrete thresholds, like 10,000 weekly active users or $10,000/month in signups.
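Repeat usage is easy to say and easy to measure inconsistently, so it helps to pin down a definition. The sketch below assumes one possible definition: the share of users who were active in at least two distinct calendar weeks. The event format, the user names, and the dates are hypothetical.

```python
from collections import defaultdict
from datetime import date


def repeat_usage_rate(events: list[tuple[str, date]]) -> float:
    """Share of users active in two or more distinct ISO weeks."""
    weeks_by_user: dict[str, set[tuple[int, int]]] = defaultdict(set)
    for user_id, day in events:
        iso = day.isocalendar()
        weeks_by_user[user_id].add((iso[0], iso[1]))  # (ISO year, ISO week)
    if not weeks_by_user:
        return 0.0
    repeaters = sum(1 for weeks in weeks_by_user.values() if len(weeks) >= 2)
    return repeaters / len(weeks_by_user)


# Hypothetical usage log: (user, day of activity).
events = [
    ("ana", date(2024, 3, 4)), ("ana", date(2024, 3, 12)),
    ("ben", date(2024, 3, 5)), ("ben", date(2024, 3, 6)),
    ("cy", date(2024, 3, 7)), ("cy", date(2024, 3, 20)),
    ("dee", date(2024, 3, 8)),
]

rate = repeat_usage_rate(events)
print(f"Repeat usage rate: {rate:.0%}")
```

Two sessions in the same week do not count as a return visit under this definition, which is a deliberate choice: it keeps a single burst of curiosity from looking like a habit.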

Based on those metrics, decide whether there's enough signal to keep going. If the product is getting traction, repeat the feedback loop. If not, customer feedback is what tells you how to pivot – refine the hypothesis and test again.

Following an iterative process – or pivoting – is a normal part of the MVP cycle. Not a sign of failure. Your team now knows what doesn't work and can course-correct with that knowledge.

Anyone can ship an MVP. The PMs who get good results are the ones who actually listen to what the data says and change course based on it.

For planning what comes after your MVP, Tempo's Strategic Roadmaps integrates directly with Jira, letting you build roadmaps that stay connected to the real work in progress rather than becoming a static document nobody updates.

